Every marketer will tell you that testing is important. It’s how you improve over time. They’re right!

But it’s not quite that simple. What tests will make the most difference? And what if you have limited data? How will you ever get a meaningful result?

Let’s answer those questions.

Understanding the basics of marketing testing

The theory behind marketing testing is very simple. Change your marketing for some of your audience and see whether you get a different result. Whenever a change gets better results, you can roll it out to all relevant marketing. If you keep testing, you get continuous improvement in results.
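In practice, "change your marketing for some of your audience" means splitting your contacts into groups. Here's a minimal Python sketch, assuming each contact has a unique ID. The function and the IDs are hypothetical, and most email and testing tools do this split for you. Hashing the ID, rather than picking at random on every visit, means the same person always lands in the same group.

```python
import hashlib

def assign_variant(contact_id: str, variants=("A", "B")) -> str:
    """Deterministically assign a contact to one variant (hypothetical helper)."""
    # The same ID always produces the same hash, so the same group
    digest = hashlib.sha256(contact_id.encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]

# Hypothetical contact IDs, for illustration only
audience = [f"contact-{i}" for i in range(1000)]
groups = {"A": [], "B": []}
for contact in audience:
    groups[assign_variant(contact)].append(contact)

print(len(groups["A"]), len(groups["B"]))  # roughly half in each group
```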

Common things to test

You can test pretty much anything you can think of, but it makes most sense to test things which you think will make a difference. For example:

On a web page:

- Does a different layout mean people spend longer on the page or are less likely to bounce back to where they came from?

- Does a simpler form with fewer fields mean more people sign up?

- What about moving the form further down the page?

- Does adding video make the page perform better?

For emails:

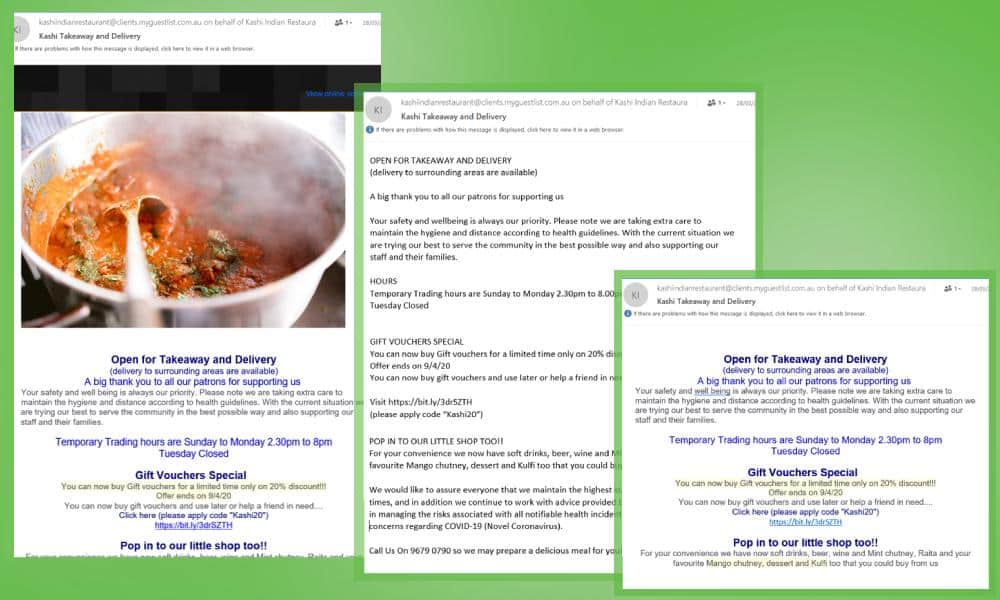

- Does a different subject line mean more people open the email?

- Do we get more clicks from rich text emails or HTML template emails?

- Is it better to have one article per email or links to multiple posts?

For any marketing:

- If we change the content to focus on a different benefit (save time writing blogs vs. get more traffic from optimised blogs), do we get more leads?

- Should we include pricing or just get an enquiry first, then talk about pricing later in the sale?

- What about different price points? (Sometimes if you sell more at a lower price, you make more profit. Sometimes, you want to sell less at a higher price.)

Split testing versus multivariate testing

If you change only one element, it’s called split testing or A/B testing.

For example, imagine we test 4 different images in a Facebook ad. The headline, the copy and the targeting stay exactly the same. The only thing we change is the image. This is split testing with 4 variations.

Now imagine we also come up with 3 different headlines and two versions of the ad copy.

This is multivariate testing and it’s much more complex. Stop to think for a moment about the number of options we just created.

4 images x 3 headlines x 2 copy versions = 24 different ads.
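If you want to see all 24 spelled out, a few lines of Python will list every combination. The labels are placeholders for your real images, headlines and copy.

```python
from itertools import product

# Placeholder labels for the variations described above
images = ["image 1", "image 2", "image 3", "image 4"]
headlines = ["headline 1", "headline 2", "headline 3"]
copy_versions = ["copy 1", "copy 2"]

# Each ad is one image + one headline + one version of the copy
ads = list(product(images, headlines, copy_versions))
print(len(ads))  # 24
```

Every extra option multiplies the total, which is why multivariate tests eat data so quickly.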

Statistical significance

This is a good time to talk about how you know when the result of a test is meaningful rather than random chance.

Imagine you toss a coin 4 times. You get 3 heads and 1 tail. Does that mean the coin is loaded? No. With only 4 tosses, a result like that is easily down to random chance.

If you toss it 400 times and get 300 heads and 100 tails? You can be pretty damn sure the coin is unevenly weighted.
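For a single coin, you can work out the odds exactly. This Python sketch uses the binomial distribution (one standard method, chosen here for illustration). It asks: if the coin were perfectly fair, how likely is it to give at least this many heads?

```python
from math import comb

def chance_of_at_least(heads: int, tosses: int) -> float:
    """Probability that a fair coin gives at least `heads` heads in `tosses` tosses."""
    ways = sum(comb(tosses, k) for k in range(heads, tosses + 1))
    return ways / 2 ** tosses

print(chance_of_at_least(3, 4))      # 0.3125, roughly a 1 in 3 chance
print(chance_of_at_least(300, 400))  # far smaller than one in a billion
```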

But there’s a bit in the middle where the results are not so clear. What if you toss the coin 20 times and get 15 heads? Is that significant, or is it just the luck of the toss?

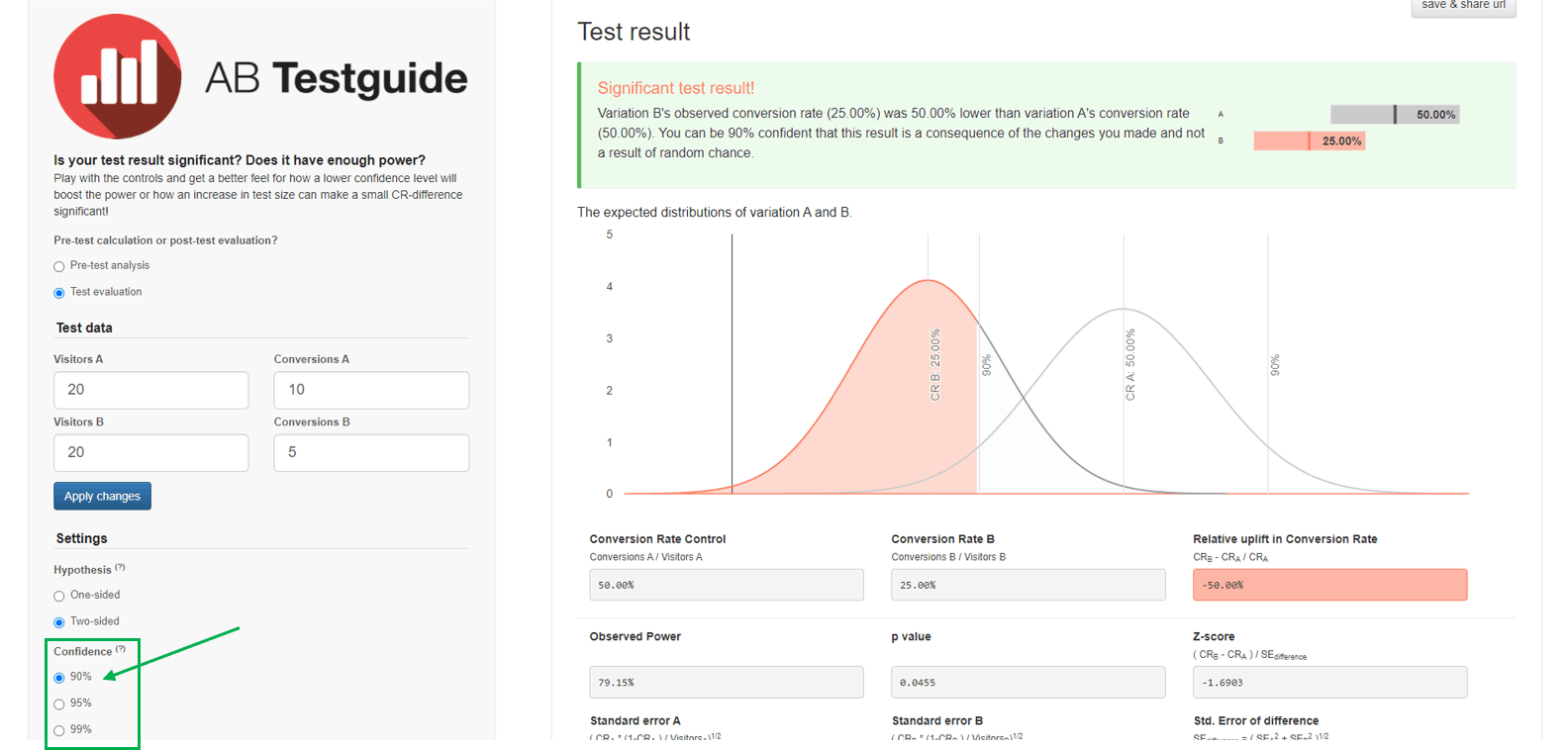

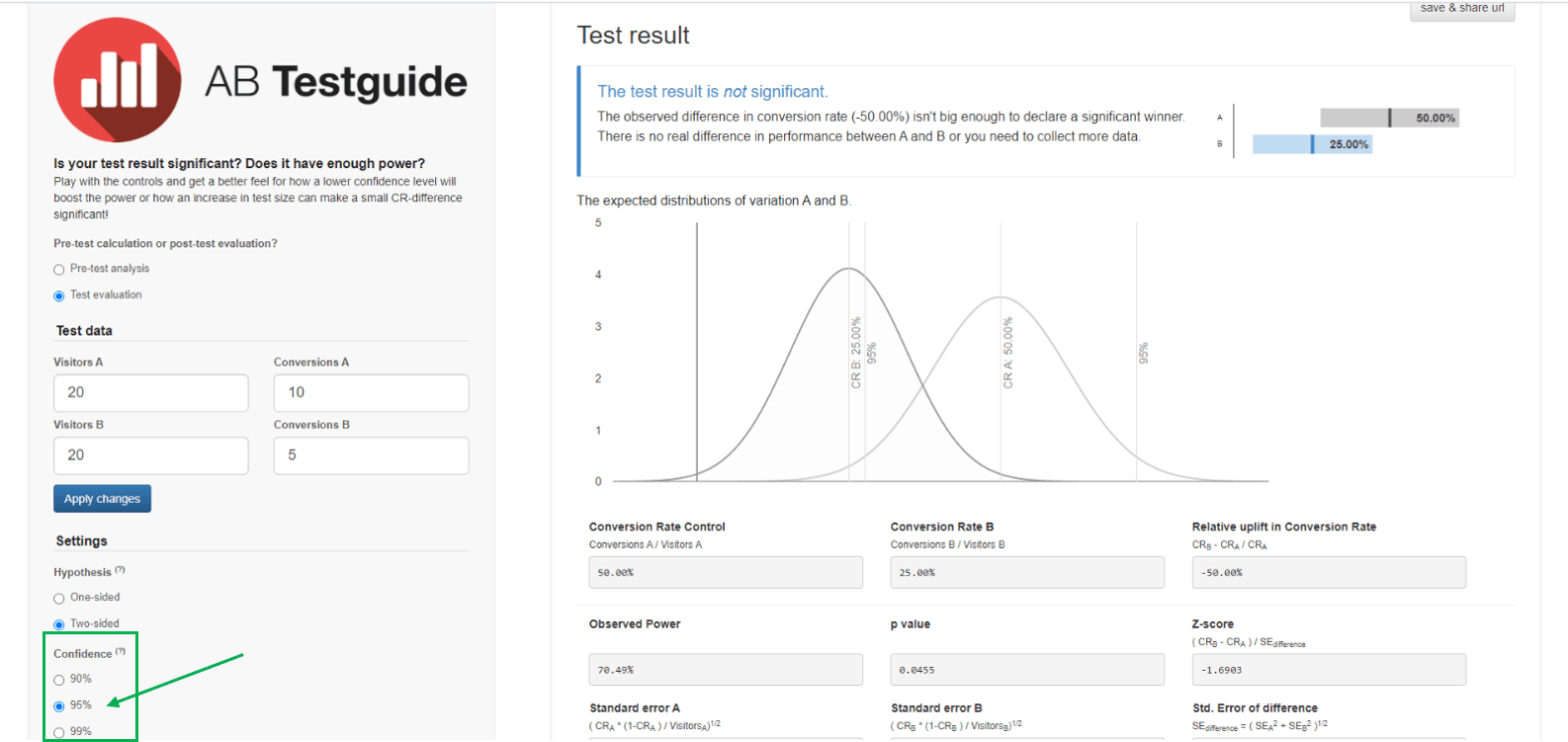

Tricky. I used this free A/B testing calculator to find out.

(Note: Version A is a completely unweighted coin which gives equal numbers of heads and tails. Version B is my theoretical 15 heads from 20 tosses.)

What’s going on? Two different answers to the same question!

But look carefully at the bottom left where it talks about confidence. You can be 90% certain that the coin is loaded, but not 95% certain. That’s what statistical significance is all about. The general rule in marketing tests is that you want 95% confidence.
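If you're curious what a calculator like this does under the hood, here's one common approach: a one-sided, pooled two-proportion z-test. I can't be sure it's exactly what that particular calculator uses. The sketch also assumes version A was entered as 10 heads from 20 tosses. With those inputs, the "confidence" clears 90% but not 95%.

```python
from math import sqrt
from statistics import NormalDist

def one_sided_confidence(successes_a, n_a, successes_b, n_b):
    """Return 1 minus the one-sided p-value that B's rate is higher than A's.

    Many A/B calculators report this figure as 'confidence'.
    """
    rate_a = successes_a / n_a
    rate_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    return NormalDist().cdf(z)

# Assumed inputs: A = 10 heads from 20 tosses, B = 15 heads from 20 tosses
confidence = one_sided_confidence(10, 20, 15, 20)
print(f"{confidence:.1%}")  # just under 95%
```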

What happens when you have limited data for your marketing test?

Many tests don’t have answers as clear-cut as our coin toss. To get 95% confidence, you need a lot of data. And you may not have it.

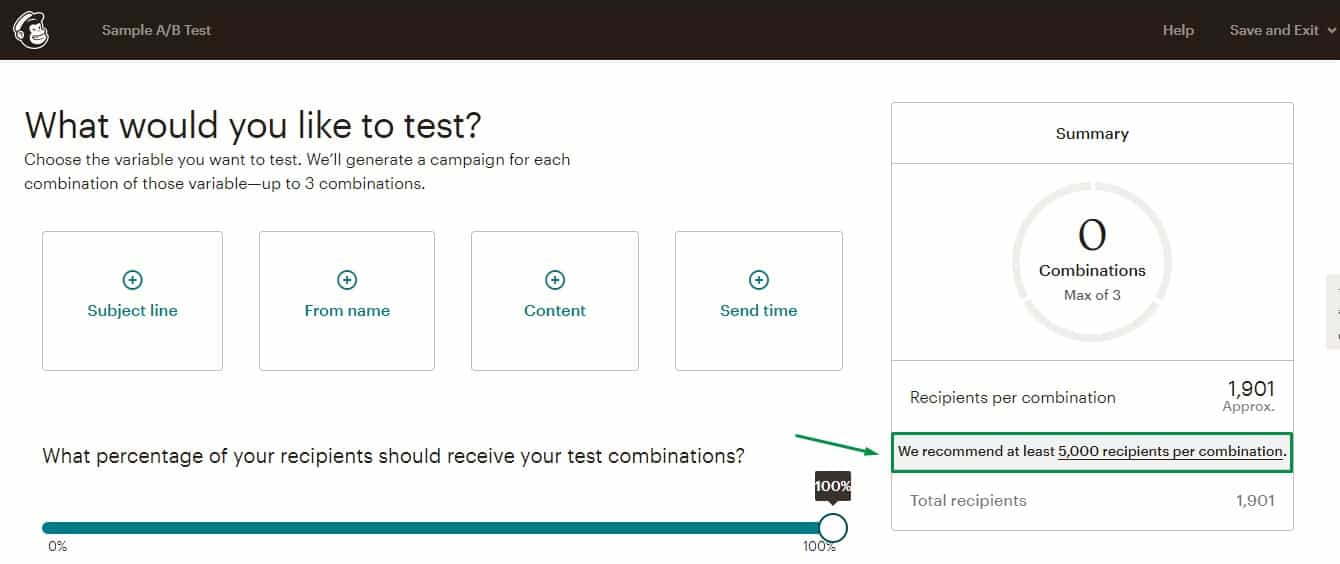

Look at this screenshot from MailChimp.

They ‘recommend at least 5,000 recipients per combination’. For the simple A/B test, they want a list of 10,000 people!

Now, you may have a contact database of 10,000 plus people. Most of us don’t.
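Where do numbers like 5,000 come from? Mailchimp's exact method isn't shown here, but a standard sample-size formula for comparing two rates lands in the same ballpark. This Python sketch assumes a two-sided test at 95% confidence with 80% power (a common default, and my assumption here). It also assumes a made-up open rate rising from 20% to 22%.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variant(base_rate, new_rate, confidence=0.95, power=0.80):
    """Approximate recipients needed in EACH group to detect base_rate -> new_rate.

    Standard two-proportion formula, two-sided test at `confidence`.
    """
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = base_rate * (1 - base_rate) + new_rate * (1 - new_rate)
    return ceil((z_alpha + z_power) ** 2 * variance / (base_rate - new_rate) ** 2)

# Hypothetical example: lifting an open rate from 20% to 22%
print(sample_size_per_variant(0.20, 0.22))  # roughly 6,500 per version
```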

So what can you do?

Tips for better marketing tests with limited data

1. Only test one thing at a time

Remember how multivariate testing meant there were lots of potential combinations to test? If you don’t have enough data, you can’t afford to share it between lots of options.

Keep tests simple!
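A quick bit of arithmetic shows why. Take a hypothetical list of 3,000 contacts. The sketch compares how many people see each version in an A/B test with the 24-ad multivariate example from earlier.

```python
# Hypothetical list size, for illustration only
list_size = 3000

ab_versions = 2          # a simple A/B test
multivariate_ads = 24    # 4 images x 3 headlines x 2 copy versions

per_version_ab = list_size // ab_versions        # 1500 people each
per_version_mvt = list_size // multivariate_ads  # 125 people each

# Mailchimp's recommended 5,000 per combination, applied to all 24 ads
needed_for_mvt = 5000 * multivariate_ads         # 120000 people

print(per_version_ab, per_version_mvt, needed_for_mvt)
```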

2. Test things which might make a big difference

Don’t bother testing a little change like the colour of a button. Try something more radical.

- The whole layout of the page (especially good for home pages and pages which get a lot of traffic from external sites)

- The key benefit you focus on in copy and images

- Your navigation
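The same kind of sample-size formula shows why radical changes are easier to test. This sketch assumes 95% confidence, 80% power and made-up sign-up rates. It compares a change that lifts sign-ups from 5% to 6% with one that lifts them from 5% to 10%.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variant(base_rate, new_rate, confidence=0.95, power=0.80):
    """Approximate people needed per version (two-sided two-proportion test)."""
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = base_rate * (1 - base_rate) + new_rate * (1 - new_rate)
    return ceil((z_alpha + z_power) ** 2 * variance / (base_rate - new_rate) ** 2)

# Hypothetical sign-up rates: a small tweak vs a radical redesign
small_change = sample_size_per_variant(0.05, 0.06)
big_change = sample_size_per_variant(0.05, 0.10)
print(small_change, big_change)  # roughly 8,150 vs roughly 430
```

The bigger the difference you're hoping for, the fewer people you need to spot it.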

3. Try some ‘fudge factors’ to increase the size of your data set

It’s all about compromising, but not compromising too much.

- See if there’s an indicator related to your key goal that has a bigger data set. For example, more people visit a sales page than actually enquire or buy. So instead of counting enquiries, perhaps look at the average time people spend on the page, the bounce rate, or whether visitors leave your site or go on to another of your pages.

- For email, consider testing over multiple mailings. You can’t test subject lines, since they’ll be different for the next email. But you can test different templates, or single article vs multi-article emails.

- Accept that you’ll have to run tests for longer to get enough data for meaningful results. But keep an eye on exactly how long you’ll have to run them for: there’s also a calculator for how long your test will need to run.
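Here's a minimal sketch of the indicator idea, assuming you can export one record per visit. The `Visit` fields and sample data are hypothetical. Treating a one-page visit as a bounce is a simplification, since analytics tools define bounces in their own ways. Only one visitor per version enquired, but every visit gives you a bounce and time-on-page figure.

```python
from dataclasses import dataclass

@dataclass
class Visit:
    variant: str           # "A" or "B"
    seconds_on_page: int
    pages_viewed: int      # 1 is treated as a bounce (a simplification)
    enquired: bool

def summarise(visits, variant):
    """Summarise one version's visits: the rare goal plus two indicator metrics."""
    group = [v for v in visits if v.variant == variant]
    return {
        "visits": len(group),
        "enquiries": sum(v.enquired for v in group),
        "bounce_rate": sum(v.pages_viewed == 1 for v in group) / len(group),
        "avg_seconds": sum(v.seconds_on_page for v in group) / len(group),
    }

# Made-up visits for illustration
visits = [
    Visit("A", 20, 1, False), Visit("A", 45, 2, False),
    Visit("A", 10, 1, False), Visit("A", 90, 3, True),
    Visit("B", 60, 2, False), Visit("B", 75, 3, False),
    Visit("B", 15, 1, False), Visit("B", 120, 4, True),
]

for variant in ("A", "B"):
    print(variant, summarise(visits, variant))
```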
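For the multi-mailing approach, you add up each template's results across mailings before comparing them. The figures below are made up for illustration.

```python
# Made-up results from three newsletters, each split between two templates
# Each value is (sent, clicked)
mailings = [
    {"template_a": (400, 22), "template_b": (400, 31)},
    {"template_a": (410, 18), "template_b": (405, 25)},
    {"template_a": (395, 20), "template_b": (400, 27)},
]

def pooled_click_rate(mailings, template):
    """Total sends, total clicks and overall click rate for one template."""
    sent = sum(m[template][0] for m in mailings)
    clicked = sum(m[template][1] for m in mailings)
    return sent, clicked, clicked / sent

for template in ("template_a", "template_b"):
    sent, clicked, rate = pooled_click_rate(mailings, template)
    print(f"{template}: {clicked}/{sent} clicked ({rate:.1%})")
```

Pooling like this assumes each mailing was split between the templates in the same way. That way, differences between newsletters (topic, send day) affect both templates roughly equally.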
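The basic arithmetic behind a test-duration calculator is simple: total people needed divided by daily traffic. This sketch assumes traffic is split evenly between versions and stays steady. The figures are hypothetical.

```python
from math import ceil

def days_to_run(needed_per_variant, variants, visitors_per_day):
    """Days until every variant reaches the sample size it needs (even split assumed)."""
    return ceil(needed_per_variant * variants / visitors_per_day)

# Hypothetical figures: 6,500 visitors per version, 2 versions, 150 visitors a day
print(days_to_run(6500, 2, 150))  # 87
```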

4. Reduce the confidence level you require

We’re in the middle of the COVID pandemic. Worldwide, we’re rolling out vaccines that have effectiveness rates around 70%.

That’s a lot less than the 95% confidence gold standard for marketing tests! But nobody’s saying the vaccine isn’t effective. (They might be worrying about side effects, but that’s a different conversation.)

If I found a change to your website had a 70% chance of improving leads, would I implement it? Hell yes!

Most testing tools won’t let you set a confidence / significance level below 95%, but you can always collect your results and check them out in this calculator.
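If you'd rather check results yourself, this sketch applies a one-sided two-proportion z-test and lets you choose the confidence level. That is one common method, not necessarily what that calculator uses. With the made-up lead numbers below, the new page falls short at 95% and 90% but passes at 70%.

```python
from math import sqrt
from statistics import NormalDist

def beats_control(conv_a, n_a, conv_b, n_b, confidence):
    """True if B beats A at the chosen confidence (one-sided two-proportion z-test)."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    return NormalDist().cdf(z) >= confidence

# Hypothetical: 30 leads from 1,000 visitors on the old page, 40 from 1,000 on the new one
for level in (0.95, 0.90, 0.70):
    print(level, beats_control(30, 1000, 40, 1000, level))
```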

5. Use additional qualitative data

If you’re testing web pages, tools like Hotjar can help. The heatmaps can give you an idea of how people are interacting with the page and help you spot ‘dead’ content which gets no attention.

You can also simply ask people. Try to choose people who are like your target market, not just random friends. And try to choose people who don’t know your site already. If you ask existing customers, they may prefer your current version simply because they are used to it and know how it works!

If you need more ideas, you can also check out this article by the very smart and very practical Peep Laja. He links to more tools, and it’s really not worth me copying them over here.

If it’s all too much and you just want someone else to work out what you can do, let’s have a quick phone or Zoom call and I’ll see what I can suggest.